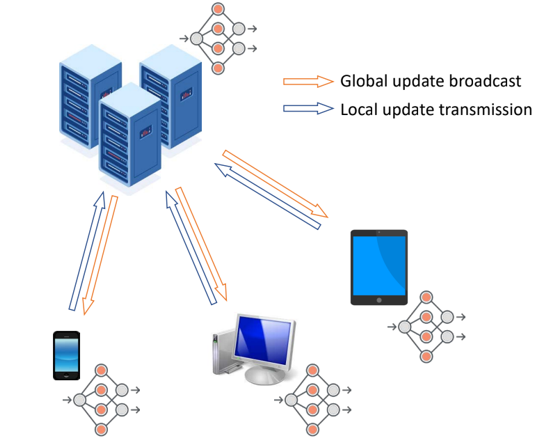

Distributed Learning

In many applications, data is distributed across clients, but a central node needs to learn an aggregate model that reflects all of the data. One approach to this problem is Federated Learning, in which each client trains a model on its local data and communicates with the central node. We are studying this problem from several angles. One is the setting where clients have imperfect communication links to the central server and the data is noisy and distributed non-uniformly across the clients. A second is adapting the amount of data transmitted between the clients and the central node to the available communication resources.
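As a rough illustration of the first setting, the sketch below runs a federated-averaging style loop on a toy scalar model `y = w * x`. It is not the method developed in this work. The sample-count-weighted averaging rule, the independent link-drop model (`p_success`), the client sizes, and all function names are assumptions chosen only to make the setting concrete: clients hold unequal amounts of noisy data, and some uploads never reach the server.

```python
import random


def local_update(w, data, lr=0.1, steps=5):
    """Run a few gradient-descent steps on one client's data for y = w * x."""
    for _ in range(steps):
        # Gradient of the mean squared error with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_round(w_global, clients, rng, p_success=0.8):
    """One round: every client trains locally; only uploads that arrive are averaged."""
    received = []
    for data in clients:
        w_local = local_update(w_global, data)
        # Assumed link model: each upload is lost independently.
        if rng.random() < p_success:
            received.append((w_local, len(data)))
    if not received:
        return w_global  # nothing arrived, so keep the previous model
    total = sum(n for _, n in received)
    # Weight each received model by its client's sample count.
    return sum(w * n for w, n in received) / total


def make_clients(rng, true_w=2.0, sizes=(5, 20, 50), noise=0.1):
    """Hypothetical clients with unequal amounts of noisy data."""
    clients = []
    for n in sizes:
        data = []
        for _ in range(n):
            x = rng.uniform(-1, 1)
            data.append((x, true_w * x + rng.gauss(0, noise)))
        clients.append(data)
    return clients


def train(rounds=50, seed=0):
    """Run several rounds from w = 0 and return the final global model."""
    rng = random.Random(seed)
    clients = make_clients(rng)
    w = 0.0
    for _ in range(rounds):
        w = federated_round(w, clients, rng)
    return w
```

Calling `train()` returns the server's estimate of the slope, which should land near the assumed true value of 2.0 even though roughly one upload in five is dropped. Lowering `p_success` or changing `sizes` is a simple way to see how unreliable links and uneven data affect the aggregate.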

This work has been supported by NSF and ARO.