Vision System and Applications

We work on various applications of computer vision, some of which are highlighted below.

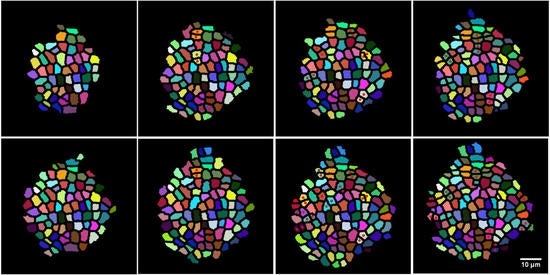

Environmental Monitoring

Computer vision and machine learning can play an important role in many environmental applications. Examples include detecting the presence and extent of a wildfire or a prescribed fire, and understanding the vegetation cover in a given region. These problems raise fundamental machine learning challenges; for example, very little labeled training data is available. Unsupervised or self-supervised learning methods therefore need to be developed for such applications.

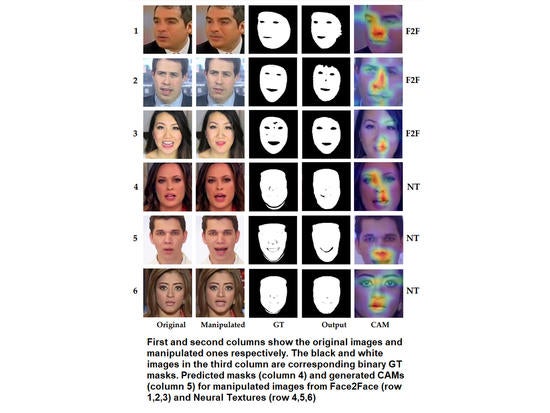

Image Forensics

The ease with which images can be forged calls for methods to detect such forgeries, and we have developed several. Our earliest work in this area demonstrated how LSTM networks that model the spatial structure in an image can be used to detect image manipulations such as duplications [ICCV 2017, TIP 2019]. We extended this approach to detecting falsifications in research publications, achieving 90% accuracy in detecting duplications on a real-life dataset of retracted papers. Our latest work addresses facial expression manipulations, whereas most of the current literature has focused on identity manipulations. This generalized framework achieves up to 99% accuracy on some datasets for detecting expression manipulations, with no loss of performance in detecting identity manipulations.

Power-Thrifty Applications of DNNs on Mobile Devices

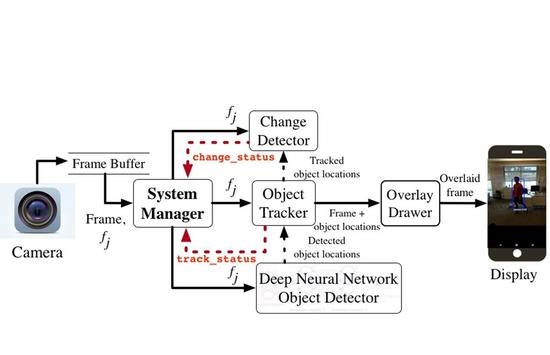

Deep networks on mobile devices can be power hungry. We have developed a framework for mobile platforms that reduces the energy drain of object detection based on deep neural networks (DNNs) while maintaining good tracking accuracy in videos. We train a DNN model to classify and localize objects in videos but invoke it only on an as-needed basis, which saves significant energy on battery-operated smartphones. Furthermore, we implement a lightweight change detector that triggers the DNN only when it detects significant change outside the currently tracked objects, while ignoring camera motion noise. Finally, we develop an intelligent method that mediates between lightweight incremental tracking and DNN-based tracking-by-detection, using information from the object tracker and the change detector. The work was a finalist for the best paper award at SenSys 2019.
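The gating idea described above can be illustrated with a minimal sketch. This is not the framework's actual implementation: the function names, the frame-differencing rule, and both thresholds are assumptions chosen for illustration, and the sketch does not compensate for camera motion, which the real change detector is described as handling.

```python
from typing import List, Tuple

Frame = List[List[int]]          # grayscale image as rows of pixel intensities
Box = Tuple[int, int, int, int]  # (x0, y0, x1, y1), with x1 and y1 exclusive


def inside_any(x: int, y: int, boxes: List[Box]) -> bool:
    """Return True if pixel (x, y) lies inside at least one tracked box."""
    return any(x0 <= x < x1 and y0 <= y < y1 for x0, y0, x1, y1 in boxes)


def changed_fraction(prev: Frame, curr: Frame, boxes: List[Box],
                     pixel_thresh: int = 25) -> float:
    """Fraction of untracked pixels whose intensity changed by more than pixel_thresh."""
    changed = total = 0
    for y, (row_prev, row_curr) in enumerate(zip(prev, curr)):
        for x, (p, c) in enumerate(zip(row_prev, row_curr)):
            if inside_any(x, y, boxes):
                continue  # the tracker already accounts for this region
            total += 1
            if abs(c - p) > pixel_thresh:
                changed += 1
    return changed / total if total else 0.0


def should_run_dnn(prev: Frame, curr: Frame, boxes: List[Box],
                   pixel_thresh: int = 25, area_thresh: float = 0.05) -> bool:
    """Hypothetical gate: run the expensive detector only on significant untracked change."""
    return changed_fraction(prev, curr, boxes, pixel_thresh) > area_thresh


# Synthetic 10x10 frames: a bright 4x4 patch appears in the top-left corner.
prev_frame = [[0] * 10 for _ in range(10)]
curr_frame = [row[:] for row in prev_frame]
for y in range(4):
    for x in range(4):
        curr_frame[y][x] = 200

untracked_trigger = should_run_dnn(prev_frame, curr_frame, boxes=[])
tracked_trigger = should_run_dnn(prev_frame, curr_frame, boxes=[(0, 0, 4, 4)])
```

In this toy setup the new patch triggers the detector when nothing is being tracked, but not when a tracked box already covers it, so the cheap tracker keeps handling that region.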

Face Recognition in Art

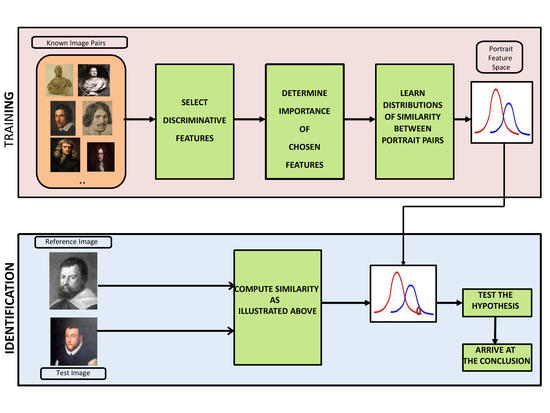

In this work, we explore the feasibility of face recognition technologies for analyzing works of portraiture, and in the process provide a quantitative source of evidence to help art historians resolve ambiguities concerning the identity of the subject in some portraits and understand artists’ styles. Works of portrait art bear the mark of the artist’s visual interpretation. Moreover, the number of samples available to model these effects is often limited. Based on an understanding of artistic conventions, we show how to learn and validate features that are robust in distinguishing the subjects of portraits (sitters) and that can also characterize an individual artist’s style. Through statistical hypothesis tests, we analyze uncertain portraits against known identities and explain the significance of the results from an art historian’s perspective. Results are shown on our dataset of over 270 portraits, belonging largely to the Renaissance era. This work was highlighted widely in the media and included in a National Geographic documentary.
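As a rough illustration of how a hypothesis test can compare an uncertain portrait against a known identity, the sketch below computes an empirical one-sided p-value for an observed similarity score against a set of scores from pairs known to show different sitters. The specific test, the function name, and the score values are assumptions made for illustration; the text above does not specify which tests or features the work uses.

```python
from typing import Sequence


def empirical_p_value(observed: float, null_scores: Sequence[float]) -> float:
    """One-sided empirical p-value with add-one smoothing.

    null_scores are similarity scores from pairs known to show different
    sitters; a small p-value means the observed score is unusually high
    for a non-matching pair.
    """
    at_least = sum(1 for s in null_scores if s >= observed)
    return (at_least + 1) / (len(null_scores) + 1)


# Made-up scores, purely for illustration.
non_match_scores = [0.1, 0.2, 0.3, 0.4]
p_moderate = empirical_p_value(0.35, non_match_scores)
p_high = empirical_p_value(0.9, non_match_scores)
```

With so few reference scores the smallest attainable p-value is 1 / (n + 1), which reflects the limited-sample constraint the paragraph mentions: a small reference set bounds how strong the evidence can be.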